Generative Engine Optimization (GEO) Audit Tool: Free AI Visibility Checker

Free GEO and AEO audit. Stackra checks your site's signals for AI bot access, schema readiness, entity clarity, and supporting infrastructure, then shows you exactly where the gaps are.

Free to sign up · Results in ~5 minutes

What is GEO?

Generative Engine Optimization refers to making your website appear as a cited source in AI-generated answers from tools like ChatGPT, Perplexity, and Google AI Overviews.

AI bot access

Whether major AI crawlers are permitted to reach and index your site.

Schema readiness

Whether your content has structured data that AI tools can parse and cite.

Entity clarity

Whether AI tools can identify your business name, location, and type.

Supporting signals

Whether your sitemap and robots.txt are reachable and correctly configured.

GEO vs SEO: how they differ

Traditional SEO

Optimizing to rank in a list of links. The goal is a high position in search results. The user clicks your link and lands on your site.

GEO

Optimizing to be cited in an AI-generated answer. The goal is to be the source an AI tool quotes when someone asks a relevant question. The user may never click; your brand is named in the answer.

Both matter. But they require different signals. A site that ranks well in Google may score poorly on GEO readiness if its structured data is thin, its entity information is ambiguous, or its content is locked behind JavaScript interactions that AI fetchers cannot see.

Four signal groups, assessed independently

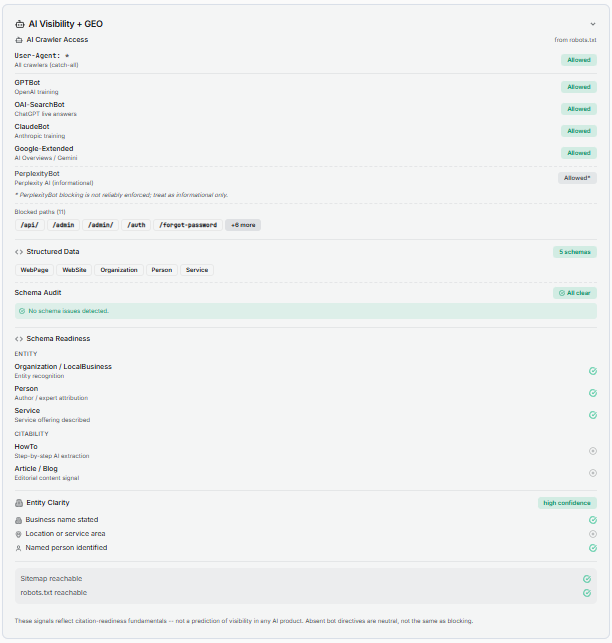

Most audit tools that mention GEO check one thing: whether your robots.txt blocks Googlebot. Stackra checks four distinct groups and reports them separately so you can see exactly where your gaps are.

AI crawler access in robots.txt (GPTBot, ClaudeBot, Google-Extended)

The most foundational GEO signal, and the one that answers "is my site visible to ChatGPT, Claude, and Perplexity?" Stackra reads and parses your robots.txt and checks access for each major AI crawler independently, not just as a pass or fail on the file as a whole.

GPTBot

OpenAI model training

OAI-SearchBot

ChatGPT Search indexing

ClaudeBot

Anthropic / Claude training

Google-Extended

Gemini training and grounding (not Google Search or AI Overviews)

PerplexityBot

Perplexity AI

Informational only. Does not reliably honor robots.txt rules. Treat as advisory.

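Explicitly allowing each AI crawler by name in your robots.txt has the same effect as a permissive User-agent: * group on its own. Naming crawlers matters when you want to block a specific one. Keep in mind that a crawler matching a named group follows only that group and ignores the User-agent: * rules, so any path blocks you still want applied to it must be repeated in its own group.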
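To make the per-crawler check concrete, here is a minimal Python sketch using the standard library's urllib.robotparser. It is an illustration under stated assumptions, not Stackra's implementation: the crawler list comes from the list above, and Python's parser only approximates RFC 9309 (it applies the first matching rule in a group rather than the longest match, and matches user-agent names by substring). The sample file is hypothetical and shows why a named group matters: GPTBot's own group overrides the Disallow in the User-agent: * group.

```python
from urllib.robotparser import RobotFileParser

# AI crawler tokens from the list above.
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "ClaudeBot", "Google-Extended", "PerplexityBot")

# Hypothetical robots.txt: GPTBot has its own group, so it ignores the * group entirely.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/

User-agent: GPTBot
Allow: /
"""


def crawler_access(robots_txt: str, path: str = "/") -> dict:
    """Map each AI crawler token to True (may fetch `path`) or False (blocked)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return {bot: parser.can_fetch(bot, path) for bot in AI_CRAWLERS}


if __name__ == "__main__":
    for bot, ok in crawler_access(SAMPLE_ROBOTS, "/private/report").items():
        print(f"{bot}: {'allowed' if ok else 'blocked'}")
```

Against the sample file, GPTBot may fetch /private/report while the other four are blocked; on /blog/ all five are allowed.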

Schema readiness for AI citation (Article, HowTo, FAQPage, Organization)

Schema markup is the structured signal layer that tells AI tools what your content means, not just what it says. Stackra divides schema readiness into two sub-categories with different GEO functions.

Entity schemas

Establish who you are. AI tools use these to place your site in a knowledge graph and associate it with a real-world entity.

Organization

Primary entity signal for any business. Establishes your name, URL, description, and logo for AI knowledge graphs.

LocalBusiness

Extends Organization with address, phone, and hours. Use for any business with a physical location.

Person

For individual practitioners, consultants, and personal brands. Supports author attribution and expertise signals.
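For reference, here is a minimal Python sketch that emits an Organization block as JSON-LD using the json module. The function name and every value passed to it are placeholders; the properties themselves (name, url, description, logo, sameAs) are standard schema.org Organization properties and match the fields listed above.

```python
import json
from typing import Optional


def organization_jsonld(name: str, url: str, description: str, logo: str,
                        same_as: Optional[list[str]] = None) -> str:
    """Return an Organization JSON-LD <script> tag (all argument values are placeholders)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "logo": logo,
    }
    if same_as:
        # Profiles elsewhere that describe the same real-world entity.
        data["sameAs"] = same_as
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


if __name__ == "__main__":
    print(organization_jsonld(
        "Example Co", "https://example.com", "Placeholder description.",
        "https://example.com/logo.png", ["https://www.linkedin.com/company/example"],
    ))
```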

Citability schemas

Establish what you publish. These directly influence whether AI tools cite your pages when generating answers about topics your content covers.

Article / BlogPosting

Primary signal for editorial content. Applies to blog posts, guides, and opinion pieces.

HowTo

Highest citability signal for step-by-step instructional content. AI tools prioritize this for process and tutorial pages.

BreadcrumbList

Signals content hierarchy to AI crawlers. Commonly present on well-cited pages. Detected and stored but excluded from the citability count (subpage schema).

FAQPage

Universal AI citation signal. Google dropped all FAQPage rich results in May 2026, so it no longer produces a SERP feature for any site type. ChatGPT, Perplexity, and other generative tools actively use FAQPage content when generating answers, with no equivalent restriction.

Citability count. Stackra reports citabilityTypeCount, the number of distinct active citability schema types detected. The count covers Article/BlogPosting, HowTo, and FAQPage, giving a range of 0–3. BreadcrumbList is detected and stored but excluded from the count: it is a subpage schema, so it is always absent on a homepage scan.
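The citability count described above can be approximated in a few lines of Python: pull every JSON-LD block out of the page, collect its @type values (including nested items and @graph arrays), and count distinct groups. This is a sketch under assumptions, not Stackra's code: it assumes Article and BlogPosting share one slot (so the maximum is 3, as stated above) and it reads only JSON-LD, not microdata.

```python
import json
from html.parser import HTMLParser

# Hypothetical grouping: Article and BlogPosting share one slot; BreadcrumbList is excluded.
CITABILITY_GROUPS = {
    "Article": "Article/BlogPosting",
    "BlogPosting": "Article/BlogPosting",
    "HowTo": "HowTo",
    "FAQPage": "FAQPage",
}


class JsonLdExtractor(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> element."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


def schema_types(node) -> set[str]:
    """Walk parsed JSON-LD (dicts, lists, @graph) and collect every @type string."""
    found: set[str] = set()
    if isinstance(node, list):
        for item in node:
            found |= schema_types(item)
    elif isinstance(node, dict):
        t = node.get("@type")
        if isinstance(t, str):
            found.add(t)
        elif isinstance(t, list):
            found.update(x for x in t if isinstance(x, str))
        for value in node.values():
            if isinstance(value, (dict, list)):
                found |= schema_types(value)
    return found


def citability_type_count(html: str) -> int:
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    types: set[str] = set()
    for block in extractor.blocks:
        try:
            types |= schema_types(json.loads(block))
        except json.JSONDecodeError:
            continue  # malformed JSON-LD is skipped, not counted
    return len({CITABILITY_GROUPS[t] for t in types if t in CITABILITY_GROUPS})
```

A page carrying BlogPosting, BreadcrumbList, and FAQPage scores 2: BreadcrumbList and nested types such as Question are collected but never counted.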

Entity clarity (Organization, LocalBusiness, Person schema)

Entity clarity answers whether AI tools can confidently identify who runs your site and where you operate. Detection is fully deterministic; no AI call is made. Stackra derives three signals from up to four layers per signal, falling back from the most structured to the most universal: JSON-LD, microdata itemprop values, geo meta tags, and Open Graph / publisher / author meta tags.

Business name

JSON-LD Organization/LocalBusiness name property → microdata itemprop → og:site_name, publisher tag

Location or service area

JSON-LD address, geo, areaServed → microdata address → geo.region, geo.placename meta tags

Named person

JSON-LD Person schema → microdata author itemprop → <meta name='author'>

Why metadata matters as a fallback. JSON-LD is the cleanest entity signal, but a large slice of the live web never adopted it: older WordPress installs without an SEO plugin, hand-coded sites, and hobbyist Squarespace and Wix builds. Stackra's detection mirrors what AI crawlers like Google-Extended and ClaudeBot already do (fall back to plain meta tags when richer markup is absent), so the entity confidence we report matches what those tools actually see.

Meta tags Stackra reads

og:site_name and the publisher meta tag: counted toward business name when no Organization schema is present.

meta name="author": counted toward named person when no Person schema is present.

geo.region and geo.placename: standard but underused location tags, counted toward location when no LocalBusiness address is present.
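The meta-tag layer is the simplest to read. The sketch below uses Python's html.parser to map each tag listed above to the signal it can confirm. It covers only this last fallback layer; in a full check it would apply only when the JSON-LD and microdata layers came up empty. Accepting og:site_name from either a property or a name attribute is an assumption, since sites use both, and the function names are hypothetical.

```python
from html.parser import HTMLParser

# Meta keys listed above and the entity signal each can confirm.
META_SIGNALS = {
    "og:site_name": "business_name",
    "publisher": "business_name",
    "author": "named_person",
    "geo.region": "location",
    "geo.placename": "location",
}


class MetaSignalParser(HTMLParser):
    """Keep the first non-empty value seen for each entity signal."""

    def __init__(self) -> None:
        super().__init__()
        self.signals: dict[str, str] = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        a = dict(attrs)
        # Assumption: accept the key from either property= (Open Graph) or name=.
        key = (a.get("property") or a.get("name") or "").strip().lower()
        content = (a.get("content") or "").strip()
        signal = META_SIGNALS.get(key)
        if signal and content and signal not in self.signals:
            self.signals[signal] = content


def meta_signals(html: str) -> dict[str, str]:
    """Return {signal: value} for the meta-tag fallback layer only."""
    parser = MetaSignalParser()
    parser.feed(html)
    parser.close()
    return parser.signals
```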

Low

0 signals confirmed

Moderate

1 signal confirmed

High

2+ signals confirmed
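Putting the layers and the thresholds together, a hypothetical resolver might take whatever each layer yielded per signal, keep the most structured value, and map the number of confirmed signals to Low, Moderate, or High as in the table above. The layer names are illustrative, and the two meta-tag layers are merged into one because each signal draws on only one kind of meta tag.

```python
from typing import Optional

# Illustrative layer names, most structured first (the two meta-tag layers are merged).
LAYER_ORDER = ("json_ld", "microdata", "meta")
ENTITY_SIGNALS = ("business_name", "location", "named_person")


def resolve_signal(layers: dict[str, Optional[str]]) -> Optional[tuple[str, str]]:
    """Return (layer, value) from the first layer that produced a non-empty value."""
    for layer in LAYER_ORDER:
        value = layers.get(layer)
        if value:
            return layer, value
    return None  # signal not confirmed by any layer


def entity_confidence(detected: dict[str, dict[str, Optional[str]]]) -> str:
    """Apply the thresholds above: 0 signals = Low, 1 = Moderate, 2 or more = High."""
    confirmed = sum(
        1 for signal in ENTITY_SIGNALS if resolve_signal(detected.get(signal, {}))
    )
    if confirmed >= 2:
        return "High"
    return "Moderate" if confirmed == 1 else "Low"
```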

Supporting infrastructure signals (sitemap, robots.txt reachability)

Two infrastructure signals that determine whether AI crawlers can discover and fully index your site.

Sitemap reachable

Whether your sitemap.xml is present and returns a valid response. AI crawlers use sitemaps the same way Googlebot does, to discover pages they might not find through link crawling alone. WordPress (5.5+), Wix, Shopify, and Squarespace generate sitemaps automatically; custom-built sites and some older setups require manual generation. Submit yours to Google Search Console.
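A sitemap is only useful if it parses. This sketch checks the body of a fetched sitemap with Python's xml.etree.ElementTree and returns its loc URLs. It assumes the standard sitemaps.org 0.9 namespace and accepts both a urlset and a sitemap index; fetching the file, and deciding where it lives (/sitemap.xml is only a convention), is left out. The function name is hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def sitemap_locations(xml_text: str) -> list[str]:
    """Return every <loc> URL from a sitemap or sitemap index document.

    Raises xml.etree.ElementTree.ParseError for malformed XML and ValueError for
    well-formed XML that is not a sitemap (for example an HTML error page).
    """
    root = ET.fromstring(xml_text)
    if root.tag not in (SITEMAP_NS + "urlset", SITEMAP_NS + "sitemapindex"):
        raise ValueError(f"unexpected root element: {root.tag}")
    return [loc.text.strip() for loc in root.iter(SITEMAP_NS + "loc") if loc.text]
```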

robots.txt reachable

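Whether your robots.txt returns a valid response. A missing file (a 4xx response) means crawlers fall back to permissive defaults, so none of the access rules you intended are in effect. A server error (5xx) is stricter: under RFC 9309, the robots.txt standard, compliant crawlers must treat the whole site as disallowed while the file is unreachable.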
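The status-code rules from RFC 9309 can be summarised as a small Python function. It is a simplification, and the function name is hypothetical: redirects should be followed before this decision, the RFC allows crawlers to use a cached copy or relax the disallow after a prolonged outage, and individual crawlers may deviate from the standard.

```python
def robots_policy(status: int) -> str:
    """Summarise how an RFC 9309 crawler treats a robots.txt fetch with this status.

    Returns "parse" (obey the rules in the file), "allow_all", or "disallow_all".
    """
    if 200 <= status < 300:
        return "parse"
    if 400 <= status < 500:
        return "allow_all"  # "unavailable": crawlers MAY access any resource
    if 500 <= status < 600:
        return "disallow_all"  # "unreachable": crawlers MUST assume complete disallow
    raise ValueError(f"status {status} needs redirect handling or is not a final HTTP status")
```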

Stackra audits Stackra

Stackra's own site is structured against the same GEO signals it checks. When we run stackra.app through the scanner, the expected output is:

All major AI crawlers explicitly allowed in robots.txt (11 named stanzas)

Organization schema on the homepage with name, URL, description, logo, and sameAs to Product Hunt

Person schema on the About page and blog posts (founder as named entity with LinkedIn sameAs)

BlogPosting schema on every article (citabilityTypeCount of 1 or 2 at article level)

Entity clarity: high confidence (business name + named person confirmed)

Sitemap and robots.txt both reachable and cross-referenced

We also document what we intentionally omit: LocalBusiness schema (Stackra is a SaaS product, not a physical location) and HowTo schema (our blog content is not currently structured in the step-by-step format HowTo requires). FAQPage schema is included on relevant content pages: it no longer produces a Google SERP feature, but ChatGPT and Perplexity actively use FAQPage content as a citation signal. Accurate schema is better than inflated schema.

What you can actually do, by platform

Your platform determines which GEO signals you can control. None of the following require a developer.

WordPress

Full server-level control. Every GEO signal is addressable through free plugins.

AI bot access

A clean User-agent: * with an empty Disallow: allows all crawlers including AI bots, with no per-bot Allow rules needed. Use explicit bot-specific blocks only if you want to restrict a particular crawler. The most common intentional block is Google-Extended, which opts your content out of Google AI training without affecting standard search indexing.

Organization schema

Rank Math's Knowledge Graph settings output correct JSON-LD automatically from your business name, type, logo, and description. In Yoast, the equivalent settings are under SEO > Search Appearance > Knowledge Graph & Schema.

Article schema on content pages

Rank Math and Yoast both apply Article or BlogPosting schema to posts automatically. Verify your content type is set correctly in each plugin's schema settings.

JavaScript content visibility

Standard themes render content in initial HTML. If you use Elementor or third-party accordion plugins, verify FAQ sections use native HTML details/summary elements, not JavaScript click-reveal components invisible to AI fetchers.
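A quick way to test for click-to-reveal content is to look for the answer text in the raw HTML without running any JavaScript. The Python sketch below does that with html.parser, ignoring script and style bodies so text that only a script would insert does not count. It assumes, as this page does, that the fetcher in question does not execute JavaScript; the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text from the initial HTML, skipping <script> and <style> bodies."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.parts.append(data)


def text_in_initial_html(html: str, snippet: str) -> bool:
    """True if `snippet` appears as text in the raw HTML, before any JavaScript runs."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.parts).split())  # normalise whitespace
    return " ".join(snippet.split()) in text
```

An answer inside a native details/summary element passes even while collapsed, because the text is in the markup; an answer written into the page by a script does not.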

Wix

Strongest built-in GEO capability of any managed platform. Most signals are configurable without code.

AI bot access

Wix provides a robots.txt editor at Settings > SEO > robots.txt. The default allows all crawlers; no per-bot Allow rules are needed. Only add explicit bot-specific blocks if you want to restrict a particular crawler, such as adding a Google-Extended block to opt out of Google AI training.

Organization/LocalBusiness schema

Wix auto-generates Organization or LocalBusiness schema from your business information. Complete all fields under Settings > Business Info; this feeds the schema automatically. Verify output with Google's Rich Results Test.

Custom schema

Wix's Structured Data Markup tool (Marketing & SEO > SEO Tools > Structured Data) supports HowTo blocks for step-by-step content. No code required.

Content visibility

Native Wix blocks are generally crawler-readable. If you have embedded third-party JavaScript widgets for accordions or tab content, verify the text is present in the page HTML, not injected after load.
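The Google-Extended opt-out described for WordPress and Wix above can be sanity-checked with Python's urllib.robotparser, which approximates rather than fully implements RFC 9309. This minimal sketch uses a hypothetical file: an empty Disallow under User-agent: * allows everything, and a single named group blocks Google-Extended. Google-Extended is a control token read by Google's existing crawlers rather than a separate fetcher, so the check only asks what the rules say for that token.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file: allow everything, then opt out of Gemini training and grounding only.
OPT_OUT_ROBOTS = """\
User-agent: *
Disallow:

User-agent: Google-Extended
Disallow: /
"""


def is_allowed(agent: str, path: str = "/", robots_txt: str = OPT_OUT_ROBOTS) -> bool:
    """True if `agent` may fetch `path` under `robots_txt` (empty Disallow = allow all)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, path)


if __name__ == "__main__":
    for agent in ("Googlebot", "Google-Extended", "GPTBot", "ClaudeBot"):
        print(agent, "allowed" if is_allowed(agent) else "blocked")
```

Only Google-Extended comes out blocked; Googlebot is untouched, which is why the opt-out does not affect standard search indexing.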

Squarespace

AI bots allowed by default. Schema basics are handled by the platform. Custom schema requires code injection.

AI bot access

Squarespace generates robots.txt automatically. The default allows all crawlers including AI bots. No action required unless you have previously customized it.

Organization schema

Squarespace auto-generates basic Organization markup from your site settings. Complete your business name, description, and contact details under Settings > Business Information.

Custom schema

JSON-LD can be injected via Settings > Advanced > Code Injection. For HowTo pages or step-by-step service guides, this is the only way to add instructional content signals.

Content visibility

Native FAQ and accordion blocks keep content in HTML. Third-party JavaScript components embedded via code blocks may hide text from AI crawlers.
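For the code-injection route, a HowTo block can be generated rather than hand-written. This is a minimal Python sketch; the function name and the steps passed in are placeholders, and the output is a JSON-LD script tag to paste into the injection field. It escapes "</" as "<\/" (a valid JSON escape) so a step containing "</script>" cannot close the tag early.

```python
import json


def howto_jsonld(name: str, steps: list[str]) -> str:
    """Return a HowTo JSON-LD <script> tag built from ordered step texts."""
    data = {
        "@context": "https://schema.org",
        "@type": "HowTo",
        "name": name,
        "step": [
            {"@type": "HowToStep", "position": i, "text": text}
            for i, text in enumerate(steps, start=1)
        ],
    }
    # Guard against a literal "</script>" inside step text ending the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(howto_jsonld("Placeholder guide", ["First step", "Second step", "Third step"]))
```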

Shopify

Strong defaults on schema and crawl access. Security headers and some structured data are platform-managed.

AI bot access

Shopify's default robots.txt permits all crawlers. The platform manages robots.txt; if your theme includes a customized robots.txt.liquid template, verify AI crawlers are not blocked.

Product and Organization schema

Shopify injects Product and Organization schema automatically for product and storefront pages. For additional schema types (HowTo, BlogPosting), use a schema app or manually add JSON-LD via theme code.

Blog and content pages

Shopify's native blog pages support Article-style content. Use the blog for substantive guides and how-to content. AI tools can crawl and cite well-structured Shopify blog posts.

Security header limitation

Shopify blocks custom HTTP security headers on standard plans. When Stackra flags a security header gap on Shopify, it is pointing at a platform ceiling, not something you can add without moving to Shopify Plus.

See also: WordPress platform guide · Shopify platform guide · Wix platform guide

Six mistakes that hurt GEO readiness

Confusing AI training access with search indexing

Blocking AI training and blocking search indexing are different choices. Google-Extended controls whether Google can use your content for AI model training; it has no effect on Googlebot or standard search ranking. You can block AI training while keeping full search visibility, or allow both. They are independent.

Accidentally blocking content pages

Disallow: /path/ under User-agent: * blocks that path for every crawler that does not have its own named group, including AI bots. Blocking system paths like /wp-admin/ or /checkout/ is correct and expected. Blocking /blog/, /services/, or /about/ is a crawlability problem. Platforms generate the right path blocks automatically; the risk is custom additions made without realizing their scope.

Click-to-reveal content invisible to AI crawlers

FAQ sections and tabs that only show text after a JavaScript click event are invisible to AI fetchers. Use native HTML <details>/<summary> elements, or ensure the content is present in the initial HTML.

Schema present but mistyped

Restaurant and MedicalClinic are valid LocalBusiness subtypes, but Stackra counts only an exact Organization or LocalBusiness @type toward entity presence. Validate your schema output with Google's Rich Results Test.

Entity information incomplete

Organization schema with only a name and URL leaves location and authorship unconfirmed. Each additional confirmed signal (location, named person) raises entity confidence. High confidence is the target for clear AI attribution.

Ignoring sitemap submission

Your sitemap lets AI crawlers discover pages they may not find through link crawling alone. Submit yours to Google Search Console and confirm it is referenced in your robots.txt. WordPress (5.5+), Wix, Shopify, and Squarespace generate sitemaps automatically; custom-built sites and some older setups do not.
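Two of the mistakes above, blocked content paths and a sitemap missing from robots.txt, are easy to lint. The sketch below uses Python's urllib.robotparser (an approximation of RFC 9309) and its site_maps() method, available from Python 3.8. The list of content paths is a hypothetical example to adapt to your own site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical content paths that should stay crawlable; adjust per site.
CONTENT_PATHS = ("/blog/", "/services/", "/about/")


def robots_lint(robots_txt: str) -> list[str]:
    """Flag content paths disallowed for User-agent: * and a missing Sitemap: line."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    problems = [
        f"{path} is disallowed for User-agent: *"
        for path in CONTENT_PATHS
        if not parser.can_fetch("*", path)
    ]
    if not parser.site_maps():  # returns None when the file has no Sitemap: line
        problems.append("no Sitemap: line in robots.txt")
    return problems
```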

Priority order for GEO readiness

Confirm your sitemap exists and is submitted in Google Search Console

WordPress (5.5+), Wix, Shopify, and Squarespace generate one automatically. Custom-built sites need manual creation.

Verify major AI crawlers are allowed in your robots.txt

GPTBot, OAI-SearchBot, ClaudeBot, and Google-Extended. A permissive User-agent: * group already allows them; naming them explicitly is optional unless you want crawler-specific rules.

Complete your business name, location, and description in your platform settings

WordPress (Rank Math Knowledge Graph), Wix (Settings > Business Info), Squarespace (Settings > Business Information). These auto-generate Organization or LocalBusiness schema.

Add Article or BlogPosting schema to content pages

Rank Math and Yoast do this automatically on WordPress. Wix applies it to blog posts natively. This is the primary citability signal.

Add HowTo schema to any step-by-step guides or service pages

Highest citability signal. Rank Math, Wix Structured Data tool, or Squarespace code injection.

Check that FAQ sections use HTML, not JavaScript click-reveal

Content that only appears after a user click is invisible to AI fetchers. Use native details/summary elements.

Run a Stackra scan to see your current GEO signal evidence

Bot access per crawler, schema detected, entity confidence level, and supporting signal status, all in one view.

How to rank in AI Overviews

An AI Overview is Google's AI-generated summary that appears at the top of a Google Search results page on many informational queries, with citations to the source pages underneath. To be cited in an AI Overview, a page must be crawlable by Googlebot, indexed and eligible to show a snippet in Google Search, and structured to answer a specific question clearly enough that the AI can lift a sentence directly. The seven actions below are the concrete steps that move an existing page into AI Overview citation eligibility.

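Allow Googlebot in robots.txt

AI Overviews are built from Google's Search index, so the page must be crawlable by Googlebot. Google-Extended is a separate robots.txt token that controls whether your content is used for Gemini training and grounding; Google states that it does not affect inclusion in Google Search, so blocking it does not remove a page from AI Overviews.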

Put a 60- to 80-word definition-style answer at the top of the page

AI Overviews lift the most concise, definition-style answer they can find. A clear short paragraph that directly answers the page's target question is the most-cited pattern.

Add FAQPage schema for any Q&A sections on the page

FAQPage schema makes Q&A pairs directly liftable. The AI can quote a question and answer almost verbatim into the Overview, with attribution to your URL.

Use specific named entities (products, people, places, tools) rather than generic terms

AI Overviews favor pages that name the same entities as the user's query. "Stackra audits 11 named AI crawlers" is more citable than "our tool checks AI bots".

Answer the questions Google lists under "People also ask" for your target query

Each related question answered on your page is a separate citation opportunity. One page can be cited in several different Overviews this way.

Confirm the page loads quickly and is mobile-readable

Google has stated Core Web Vitals and mobile usability are inputs to AI Overview source selection. Slow or broken pages get filtered out before AI generation.

Update both the visible date and the schema dateModified when content materially changes

AI Overviews tend to favor pages with recent freshness signals on time-sensitive topics. Update the schema's dateModified field, not just the displayed date.

AI Overview indexing is not instant. After updating a page, expect 1 to 7 days for Google to re-crawl an established site, then 2 to 6 weeks before the page typically appears in AI Overviews on its target queries.
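Two of the actions above, FAQPage schema and an updated dateModified, come together in one JSON-LD object. This is a minimal Python sketch with a hypothetical function name and placeholder questions; the returned dict is meant to be serialised with json.dumps into a JSON-LD script tag. dateModified is a schema.org CreativeWork property, which FAQPage inherits through WebPage.

```python
import json
from datetime import date
from typing import Optional


def faq_jsonld(pairs: list[tuple[str, str]], modified: Optional[date] = None) -> dict:
    """Build a FAQPage JSON-LD object from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    if modified is not None:
        # Update this whenever the content materially changes, not just the visible date.
        data["dateModified"] = modified.isoformat()
    return data


if __name__ == "__main__":
    print(json.dumps(faq_jsonld([("Placeholder question?", "Placeholder answer.")], date.today()), indent=2))
```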

More on Generative Engine Optimization

Background, deep-dives, and platform-specific guidance for being cited by AI search.

What Is GEO? Generative Engine Optimization Explained

The core concept, the AI crawlers involved, and the four signal groups that determine GEO readiness.

What Stackra Detects for GEO, and Why It Is Different

Per-bot robots.txt parsing, schema by function, entity confidence, and supporting infrastructure.

GEO for Small Businesses: What You Can Actually Do

Platform-specific actions for WordPress, Wix, and Squarespace owners.

Why Doesn't AI Mention My Business?

The real reasons AI tools omit a business, and the three things that actually fix it.

How We Optimized Stackra for GEO: A Before and After

The exact four changes we made to move every GEO signal from absent to confirmed.

See your GEO signals in under five minutes

Enter your URL and Stackra checks all four signal groups: bot access per crawler, schema by function, entity clarity confidence, and infrastructure readiness.